Packages or Containers?

10 Oct 2022 - Giulio Vian - ~5 Minutes

In a previous article I considered a principle that DevOps inherits from Lean: the importance of minimizing the size of releases. Lean talks about small batches, which for software means reducing as much as possible the number of changes in a release. With some simple examples, I hope I demonstrated the advantages of small batches and how automation follows from minimizing the size of our releases.

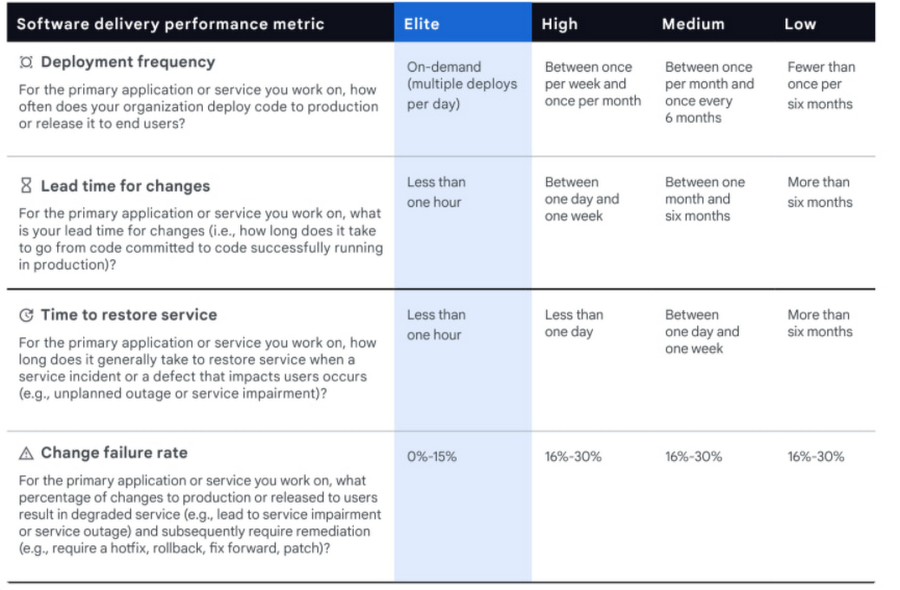

Today we return to the topic because the results in Accelerate show that the best-performing organizations have a very high Deployment Frequency, that is, frequency of releases: up to several releases per day (p. 19).

While this is undeniable for an organization as a whole, the Deployment Frequency metric must be viewed differently when we go down to the level of a single application. The update frequency will depend on both the maturity of the application and its strategic importance. These factors combine and average out when we have an IT portfolio with many internally developed applications.

Maturity

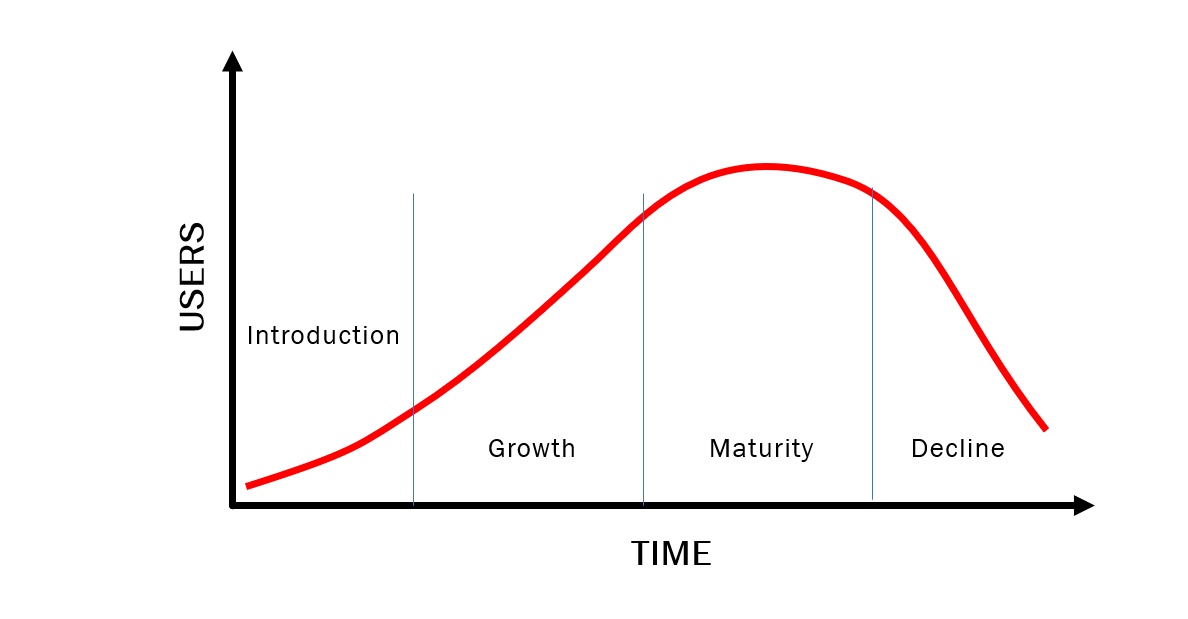

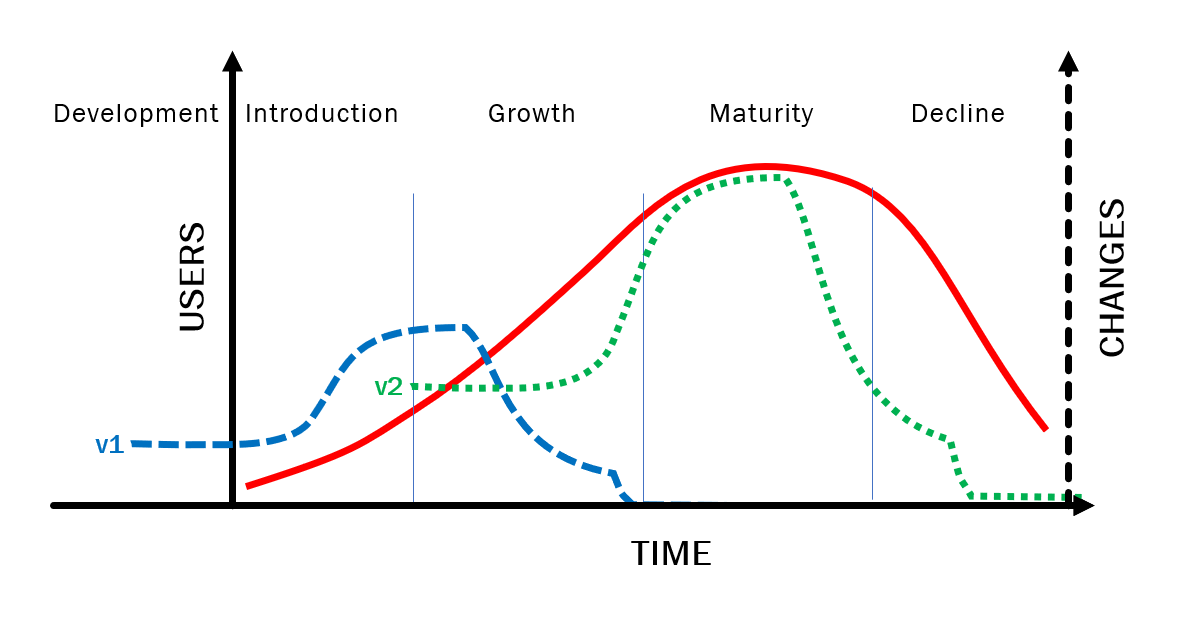

Each application, IT sub-system, system asset, or whatever you want to call it, has a specific life cycle, variously divided into four, five or more phases (e.g., Purchase, Release, Use, Maintenance, Withdrawal and Disposal).

Consider internally developed systems, that is, applications. These typically go through four stages:

- Development

- Growth

- Maturity

- Decline

Let’s start with the financial perspective for a single application, product or service. The financial perspective focuses on use or revenue. The usage curve we expect is the one typical of a product: a phase of intense growth after the initial advertising, then stabilization, and finally a decline until the final withdrawal.

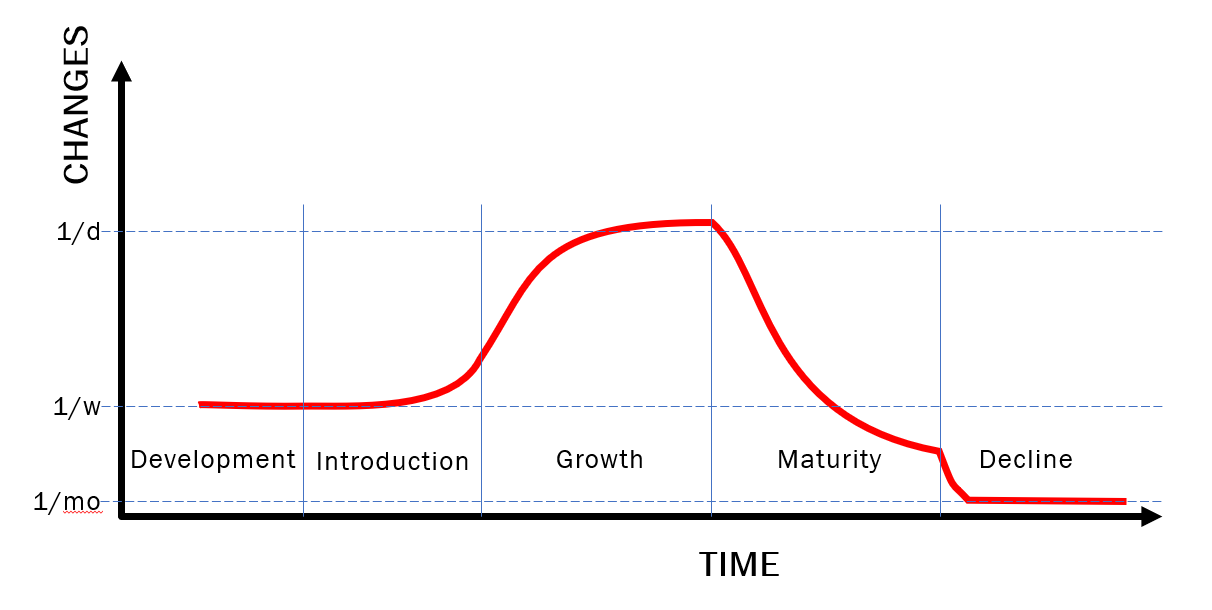

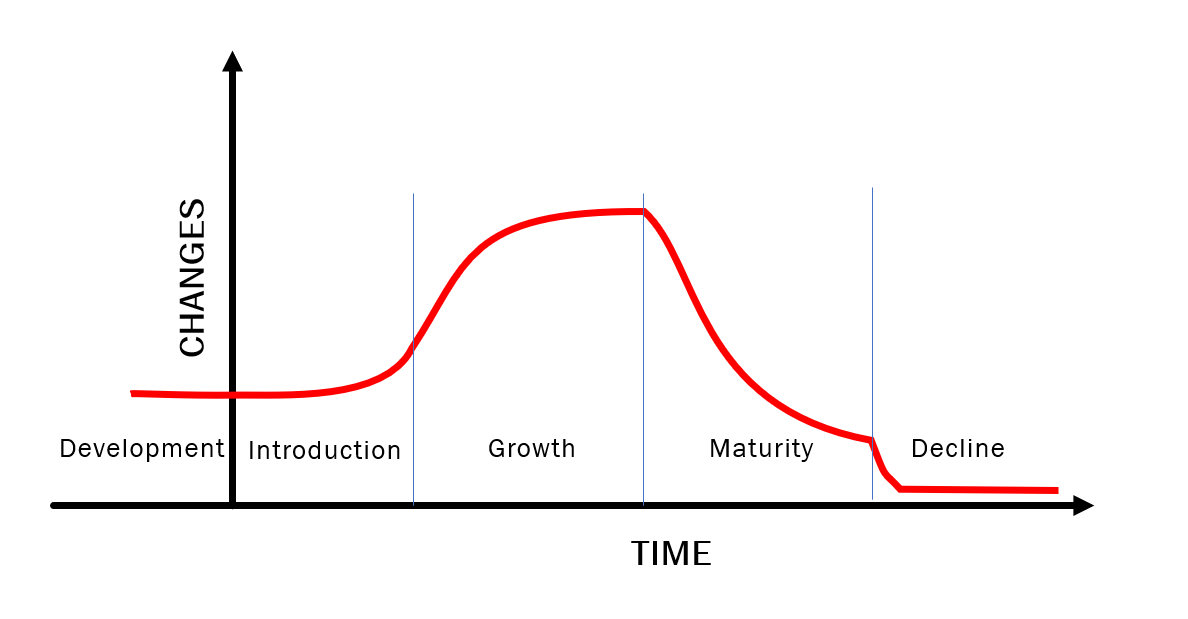

We now come to the engineering perspective. Here we are concerned with how often we introduce changes to these applications.

A typical pattern sees an almost constant investment phase until the product or service takes hold and it becomes possible to increase investment, giving greater value to customers. Once the product has matured, the investment, and therefore the rate of change, falls quite rapidly: this is the phase in which investors begin to collect their return. The number of new features decreases and ordinary maintenance becomes the main activity. When it becomes clear that the market will no longer give significant returns for that product or service, all that remains is maintenance for a shrinking user base, until the final withdrawal of the product.

This is a simplification, of course. If the application sees exponential growth, it is not uncommon for the initial design to fail to withstand the rate of change or the increasing load. When the organization, based on technical evidence, opts for a radical redesign, one product is in effect replaced by a new one.

The migration of Twitter from Ruby to Scala is among the best-known examples of rewriting a product to adapt it to growth (see "Twitter Shifting More Code to JVM, Citing Performance and Encapsulation As Primary Drivers" on infoq.com and "Twitter Engineering: Twitter Search is Now 3x Faster"). Another example is Facebook Messenger ("Project LightSpeed: Rewriting Messenger to be faster, smaller, and simpler").

In summary: the number and frequency of changes to an application vary over time, declining as it reaches maturity.

Strategic Importance

The surest thing about the future is its unpredictability. Quite obvious, isn’t it? In our case we have to think about how the strategic importance of an application affects its evolution.

In the Maturity section, the usage graph appears well defined, but it will match reality only once the application has reached the end of its life! During the initial stages, one can only make assumptions about how the curve will evolve. In response to market conditions and user requests, management makes strategic choices: it may decide to invest more or to withdraw resources, up to the point of, for example, replacing a home-made solution with a commercial one (buy vs. make). To anyone interested in learning more about calibrating IT investment, I recommend studying a tool like Wardley Maps.

We therefore have two elements that contribute to increasing or decreasing the development work, and consequently the number of changes available over time for release to production: maturity and strategic importance.

Deployments

Some of you may have already guessed how these two elements of a product, maturity and strategic importance, affect releases, but let’s proceed in order.

Clearly, the more people contribute to a product or application, the more changes are produced per unit of time.

The reverse is also true: the fewer people work on it, the fewer changes are produced. This is particularly true when the product starts to decline: changes become infrequent, a handful a year, with no new features, only fixes and adjustments.

This simple finding has a direct impact on the release rate: if there are no changes available, what can I release?
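To make the point concrete, here is a minimal Python sketch of how the same release log can give very different Deployment Frequency figures for each application and for the portfolio as a whole. The application names, the counts and the four-week window are all invented for illustration; they are not data from Accelerate or from any real organization.

```python
from collections import Counter

# Hypothetical release log for a four-week window: one entry per production
# deployment. Application names and counts are invented for illustration.
RELEASE_LOG = ["growing-app"] * 8 + ["mature-app"] * 2
PORTFOLIO = ["growing-app", "mature-app", "declining-app"]
WEEKS = 4


def deployment_frequency(release_log, portfolio, weeks):
    """Return (deployments per week for each app, deployments per week overall)."""
    counts = Counter(release_log)
    # Counter yields 0 for applications that never appear in the log.
    per_app = {app: counts[app] / weeks for app in portfolio}
    overall = len(release_log) / weeks
    return per_app, overall


per_app, overall = deployment_frequency(RELEASE_LOG, PORTFOLIO, WEEKS)
for app, freq in per_app.items():
    print(f"{app}: {freq:.2f} deployments/week")
print(f"portfolio: {overall:.2f} deployments/week")
```

With these made-up numbers the portfolio as a whole deploys 2.5 times per week, while the declining application shows zero: there was simply nothing to release.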

Conclusion

In summary, the Deployment Frequency metric only makes sense at the level of an organization with an application portfolio. It cannot be considered at the level of a single application unless you factor in the application's maturity and the investment in it, which means using a different metric.

In fact, the true Lean approach requires the ability to deliver on demand, whenever necessary or useful. Hence the need for automation even in the less obvious cases, as I mentioned in "Automate? Always!".

In a future article, I will address the issue of non-functional releases of an application and the increasing importance they have in modern computing.

What do you think? Am I wrong?